
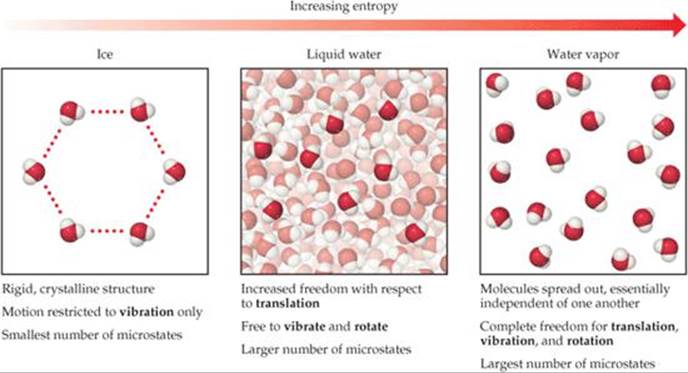

‘Disorder’ in Thermodynamic Entropy: Boltzmann’s sense of “increased randomness” as a criterion of the final equilibrium state for a system compared to initial conditions was not wrong. The flaw was his surprisingly simplistic conclusion: if the final state is random, the initial system must have been the opposite, i.e., ordered. “Disorder” was the consequence, to Boltzmann, of an initial “order”, not, as is obvious today, of what can only be called a “prior, lesser but still humanly-unimaginable, large number of accessible microstates”.

Microstates: Dictionaries define “macro” as large and “micro” as very small, but a macrostate and a microstate in thermodynamics aren't just definitions of big and little sizes of chemical systems. Instead, they are two very different ways of looking at a system. A microstate is one of the huge number of different accessible arrangements of the molecules' motional energy for a particular macrostate.

Simple Entropy Changes - Examples: Several examples are given to demonstrate how the statistical definition of entropy and the 2nd law can be applied: phase change, gas expansions, dilution, colligative properties and osmosis.

Statistical Entropy: Entropy is a state function that is often erroneously referred to as the 'state of disorder' of a system. Qualitatively, entropy is simply a measure of how much the energy of atoms and molecules becomes more spread out in a process, and it can be defined in terms of statistical probabilities of a system or in terms of the other thermodynamic quantities.

Statistical Entropy - Mass, Energy, and Freedom: The energy or the mass of a part of the universe may increase or decrease, but only if there is a corresponding decrease or increase somewhere else in the universe. The freedom in that part of the universe may increase with no change in the freedom of the rest of the universe. There might be decreases in freedom in the rest of the universe, but the sum of the increase and decrease must result in a net increase.

By the end of this section, you will be able to:

- Explain the relationship between entropy and the number of microstates.
- Predict the sign of the entropy change for chemical and physical processes.

In 1824, at the age of 28, Nicolas Léonard Sadi Carnot (Figure 12.7) published the results of an extensive study regarding the efficiency of steam heat engines. A later review of Carnot’s findings by Rudolf Clausius introduced a new thermodynamic property that relates the spontaneous heat flow accompanying a process to the temperature at which the process takes place. This new property was expressed as the ratio of the reversible heat (\(q_\text{rev}\)) and the kelvin temperature (\(T\)). In thermodynamics, a reversible process is one that takes place at such a slow rate that it is always at equilibrium and its direction can be changed (it can be “reversed”) by an infinitesimally small change in some condition. Note that the idea of a reversible process is a formalism required to support the development of various thermodynamic concepts; no real processes are truly reversible, rather they are classified as irreversible.

In terms of the number of microstates \(W\), the entropy change for a process is

\[ \Delta S = S_f - S_i = k \ln W_f - k \ln W_i = k \ln \frac{W_f}{W_i} \]

where \(k\) is the Boltzmann constant and \(W_i\) and \(W_f\) are the numbers of microstates of the initial and final states. For processes involving an increase in the number of microstates, \(W_f > W_i\), the entropy of the system increases and \(\Delta S > 0\). Conversely, processes that reduce the number of microstates, \(W_f < W_i\), yield a decrease in system entropy, \(\Delta S < 0\). This molecular-scale interpretation of entropy provides a link to the probability that a process will occur, as illustrated in the next paragraphs.

Consider the general case of a system comprised of \(N\) particles distributed among \(n\) boxes. The number of microstates possible for such a system is \(n^N\). For example, distributing four particles among two boxes will result in \(2^4 = 16\) different microstates, as illustrated in Figure 12.8. Microstates with equivalent particle arrangements (not considering individual particle identities) are grouped together and are called distributions. The probability that a system will exist with its components in a given distribution is proportional to the number of microstates within the distribution.
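
The counting argument for N particles in n boxes, and the relation ΔS = k ln(W_f / W_i), can be checked with a short Python sketch. This is only an illustration under stated assumptions: the function names `count_distributions` and `entropy_change` are hypothetical and do not come from the text, and the brute-force enumeration is practical only for small N.

```python
from collections import Counter
from itertools import product
from math import log

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def count_distributions(n_particles, n_boxes):
    """Enumerate every microstate and group the microstates into distributions.

    A microstate assigns each labelled particle to one box. A distribution
    records only how many particles sit in each box, ignoring identities.
    (Hypothetical helper written for this illustration.)
    """
    distributions = Counter()
    for microstate in product(range(n_boxes), repeat=n_particles):
        occupancy = tuple(microstate.count(box) for box in range(n_boxes))
        distributions[occupancy] += 1
    return distributions


def entropy_change(w_initial, w_final):
    """Return delta S = k ln(W_f / W_i) in J/K. (Hypothetical helper.)"""
    return K_B * log(w_final / w_initial)


# Four particles in two boxes: 2**4 = 16 microstates in total.
dist = count_distributions(4, 2)
total = sum(dist.values())
for occupancy, w in sorted(dist.items(), reverse=True):
    # The probability of a distribution is its share of all microstates.
    print(occupancy, w, w / total)

# Going from the (4, 0) distribution to the (2, 2) distribution
# increases W, so the entropy change is positive.
print(entropy_change(dist[(4, 0)], dist[(2, 2)]))
```

For four particles in two boxes, the grouping gives five distributions containing 1, 4, 6, 4 and 1 microstates, so the evenly split arrangement is the most probable, with 6 of the 16 microstates.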

Entropy is a state function that is often erroneously referred to as the 'state of disorder' of a system. Entropy is also the subject of the Second and Third laws of thermodynamics, which describe the changes in entropy of the universe with respect to the system and surroundings, and the entropy of substances, respectively.
